Composable primitives. Trace-first observability.

An open-source Python SDK for engineers building single-agent and multi-agent systems in production. Compose ReAct, Reflexion, and coordination patterns, with a built-in trace viewer.

pip install nanitics

Three primitives, one composing agent.

The full trace, no instrumentation added.

Three layers of agents. `researcher` is a ReAct agent with one search tool. `grounded` wraps `researcher` in a Reflexion loop that retries until its output clears a programmatic check. `coordinator` invokes the wrapped agent as a tool and composes the final answer.

    evaluator = ProgrammaticEvaluator(
        checks=[
            EvaluationCheck(
                name="cites_two_sources",
                check=lambda out: out.count("[") >= 2,
                feedback="Cite at least two sources from search().",
            )
        ],
    )

    researcher = ReActAgent(
        name="researcher",
        llm_client=researcher_llm,
        emitter=emitter,
        system_prompt="Research the question using search() and cite results as [R-N].",
        tools=[search],
    )

    grounded = ReflexionAgent(
        name="grounded",
        llm_client=reflection_llm,
        emitter=emitter,
        system_prompt="Reflect on the previous attempt and prescribe a corrected approach.",
        inner_agent=researcher,
        evaluator=evaluator,
        episode_store=InMemoryEpisodeStore(embedding_client=MockEmbeddingClient()),
    )

    coordinator = ReActAgent(
        name="coordinator",
        llm_client=coordinator_llm,
        emitter=emitter,
        system_prompt="Delegate research to the grounded researcher, then synthesise the final answer.",
        tools=[
            AgentTool(
                agent=grounded,
                emitter=emitter,
                caller_name="coordinator",
                description="Delegate research questions to the grounded researcher.",
            )
        ],
    )

    result = await coordinator.run("What changed in our retry policy last quarter?")

Swap `MockLLMClient` for `AnthropicLLMClient(model='claude-haiku-4-5')` to run against a real provider. Everything else is identical.
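The retry-until-the-check-passes behaviour can be pictured with a small, library-free sketch. This is not the Nanitics implementation: `Check`, `run_with_retries`, and `fake_researcher` are hypothetical names invented for illustration, and the feedback is passed straight back to the next attempt rather than turned into an LLM-generated reflection as a Reflexion agent would do.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Check:
    """Hypothetical stand-in for a programmatic check (not a Nanitics class)."""
    name: str
    predicate: Callable[[str], bool]
    feedback: str


def run_with_retries(
    attempt: Callable[[List[str]], str],
    checks: List[Check],
    max_attempts: int = 3,
) -> Tuple[str, int]:
    """Call attempt(feedback) until every check passes or attempts run out.

    Returns the last output and the number of attempts used.
    """
    feedback: List[str] = []
    output = ""
    for n in range(1, max_attempts + 1):
        output = attempt(feedback)
        failed = [c for c in checks if not c.predicate(output)]
        if not failed:
            return output, n
        # Feed the failed checks' feedback into the next attempt.
        feedback = [c.feedback for c in failed]
    return output, max_attempts


def fake_researcher(feedback: List[str]) -> str:
    """Made-up inner agent: cites one source first, two once it gets feedback."""
    if not feedback:
        return "Placeholder finding [R-1]."
    return "Placeholder finding [R-1], confirmed by a second result [R-2]."


checks = [
    Check(
        name="cites_two_sources",
        predicate=lambda out: out.count("[") >= 2,
        feedback="Cite at least two sources from search().",
    )
]

answer, attempts = run_with_retries(fake_researcher, checks)
print(attempts, answer)
```

The first attempt contains a single `[` and fails the predicate; the second sees the feedback, cites two sources, and passes. Note that counting `[` characters is a loose proxy for "two sources", since it would also accept the same citation repeated twice.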

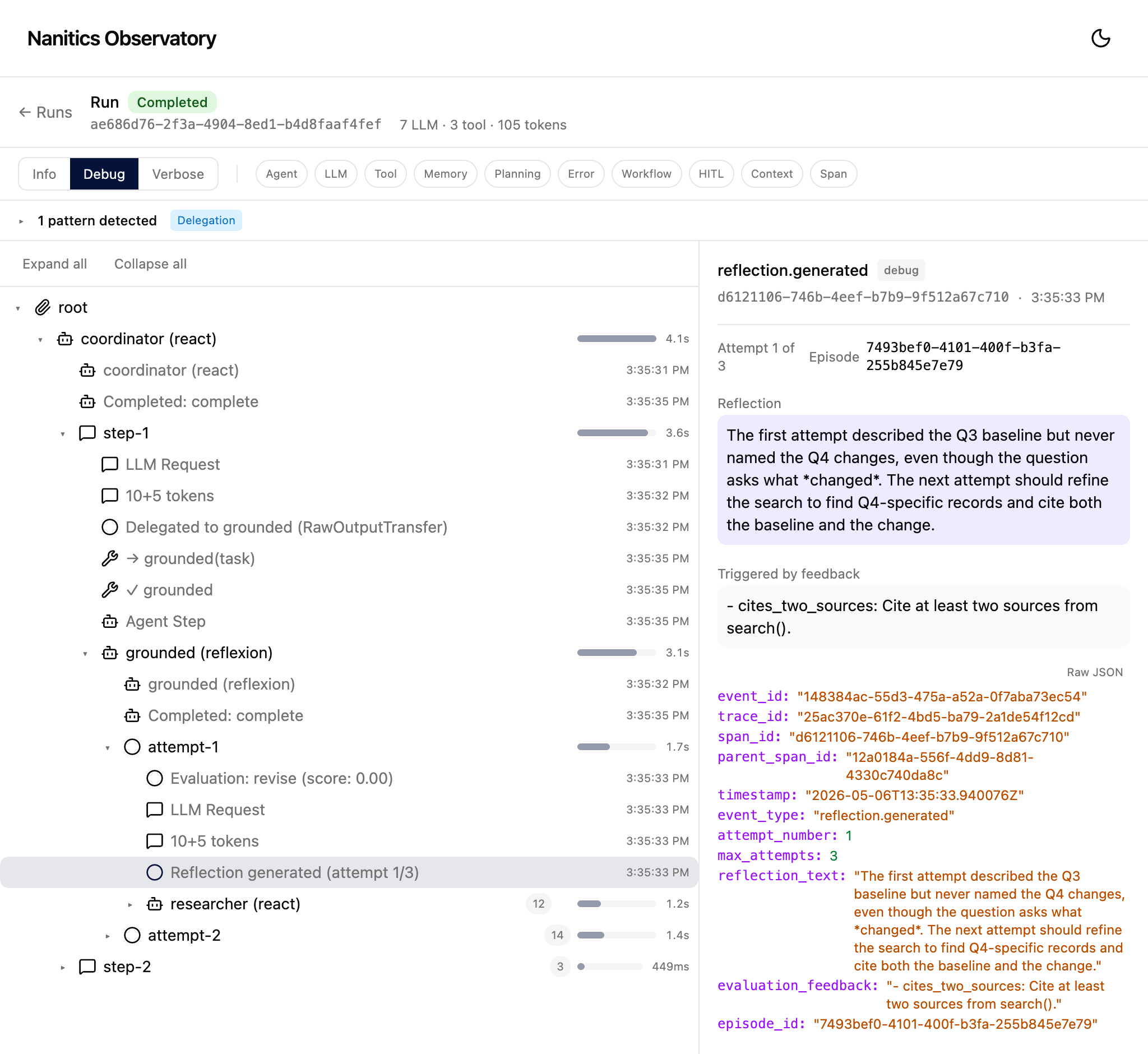

`coordinator` delegates to `grounded`. `grounded` runs the inner `researcher` twice; attempt 2 clears the predicate that attempt 1 failed. The right pane shows the reflection generated between attempts, alongside the feedback that triggered it.

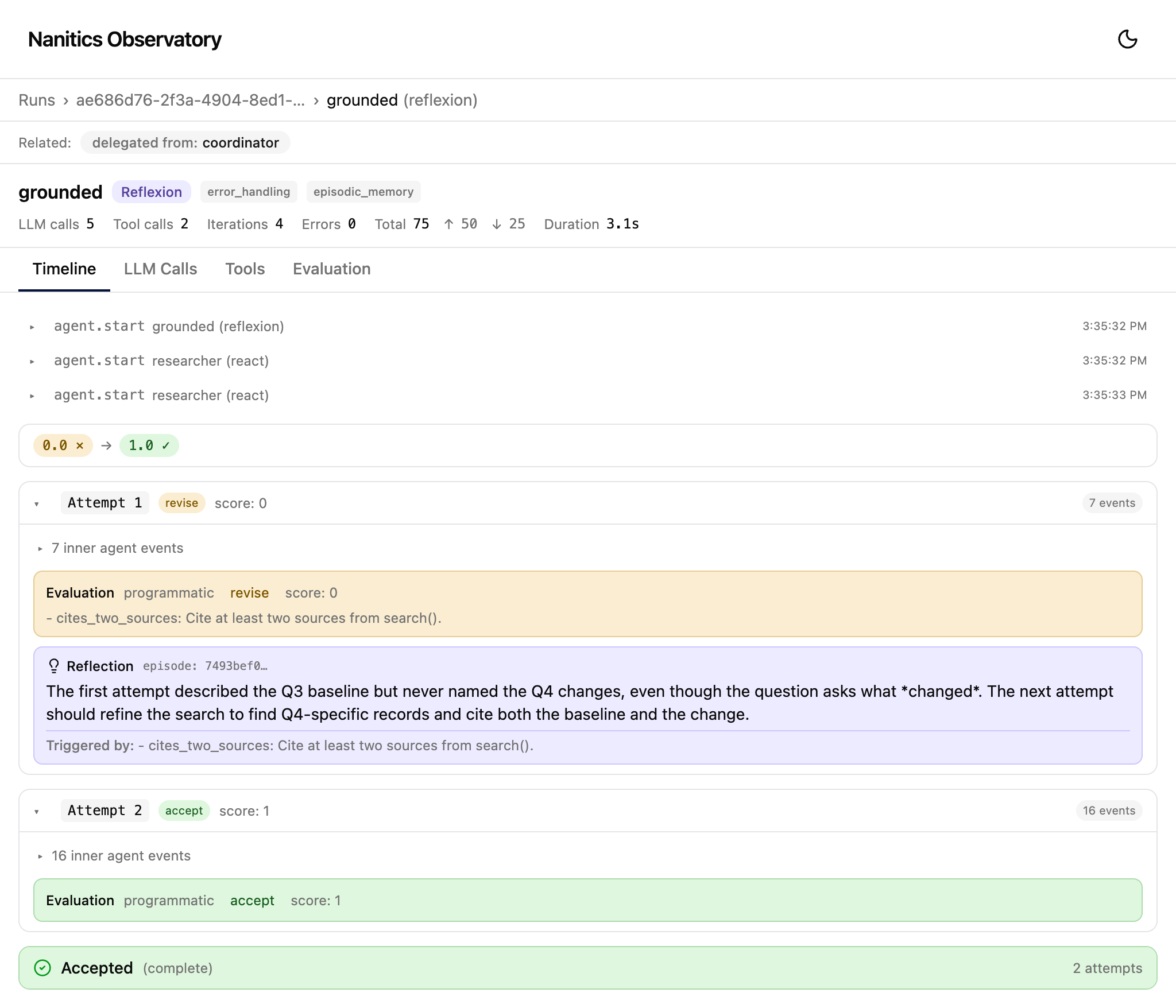

`grounded`. The header records three LLM calls, two tool calls, four iterations, and 3.1 seconds of wall time. Attempt 1 evaluates to revise at score 0 and triggers a reflection. Attempt 2 evaluates to accept at score 1. The timeline below shows every event the agent emitted.

Nine groups of primitives.

- Agent strategies. Seven built-in: ReAct, Reasoning, Reflexion, ReWOO, CodeAct, LATS, Tree of Thought.
- Multi-agent coordination. Eleven patterns including handoff, supervisor, blackboard, debate, consensus, agent-as-tool.
- Memory. Working, episodic, long-term, semantic. Pluggable stores.
- Orchestration. Seven workflow shapes: sequential, pipeline, parallel, DAG, loop, map-reduce, conditional.
- Evaluation. Programmatic checks and LLM-as-judge evaluators with retry-on-fail.
- Human-in-the-loop. Approval gates, revision gates, durable suspension across processes.
- Tools. Function tools, conditional tools, MCP tools, automatic schema generation.
- Planning. Upfront and adaptive planning with goal tracking and plan-adherence evaluation.
- Observability. Event-based tracing with the Observatory trace viewer.
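To make "event-based tracing" concrete, here is a minimal sketch of the general idea: components emit structured events that carry a parent reference, and a viewer rebuilds the hierarchy from those references. `Event`, `ListEmitter`, and `render` are hypothetical names for this illustration only and say nothing about the actual Nanitics emitter interface or event schema.

```python
from dataclasses import dataclass, field
from itertools import count
from typing import Iterator, List, Optional


@dataclass
class Event:
    """Hypothetical structured event; real schemas will carry more fields."""
    id: int
    kind: str
    name: str
    parent_id: Optional[int] = None


@dataclass
class ListEmitter:
    """Hypothetical emitter that simply appends events to an in-memory list."""
    events: List[Event] = field(default_factory=list)
    _ids: Iterator[int] = field(default_factory=lambda: count(1))

    def emit(self, kind: str, name: str, parent: Optional[Event] = None) -> Event:
        event = Event(next(self._ids), kind, name, parent.id if parent else None)
        self.events.append(event)
        return event


def render(events: List[Event], parent_id: Optional[int] = None, depth: int = 0) -> List[str]:
    """Turn the flat event list into indented lines, children under parents."""
    lines: List[str] = []
    for e in events:
        if e.parent_id == parent_id:
            lines.append("  " * depth + f"{e.kind}: {e.name}")
            lines.extend(render(events, e.id, depth + 1))
    return lines


emitter = ListEmitter()
root = emitter.emit("agent", "coordinator")
call = emitter.emit("tool_call", "grounded", parent=root)
emitter.emit("agent", "researcher", parent=call)
emitter.emit("evaluation", "cites_two_sources", parent=call)

timeline = render(emitter.events)
print("\n".join(timeline))
```

Because every component shares one emitter, the nesting falls out of the parent links alone; nothing has to be wrapped or decorated after the fact.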

Questions before adopting.

- Is Nanitics production-ready?

  Pre-1.0. The public surface is `nanitics.__all__`. Breaking changes are documented in `CHANGELOG.md` and follow the deprecation policy. The SDK matures through real applications, not speculative design.

- Which LLM providers does Nanitics support?

  Anthropic and OpenAI clients ship by default. Mistral and LiteLLM are extras. `MockLLMClient` supports development and testing without an API key.

- What does "trace-first observability" mean in practice?

  Every agent loop, tool call, evaluation, and coordination event emits a structured event. The Observatory trace viewer renders these as a hierarchical timeline. There is no separate tracing service to bolt on.

- Can I use Nanitics without an API key?

  Yes. `MockLLMClient` mirrors real client behavior with scripted responses. Every example in the repository runs deterministically without network access.

- Does Nanitics impose a runtime, server, or framework?

  No. Nanitics is a Python library. There is no runtime, no database, no web framework. You compose the primitives inside whatever application you build.
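As an illustration of why scripted responses make examples deterministic, the sketch below shows the general pattern with a hypothetical `ScriptedClient`. It is not `MockLLMClient`, and the `complete` method name and signature are assumptions made for this example.

```python
import asyncio
from typing import Iterable, List, Tuple


class ScriptedClient:
    """Hypothetical client that replays canned responses in order."""

    def __init__(self, responses: Iterable[str]) -> None:
        self._responses: List[str] = list(responses)
        self.prompts: List[str] = []  # recorded so tests can inspect them

    async def complete(self, prompt: str) -> str:
        self.prompts.append(prompt)
        if not self._responses:
            raise RuntimeError("script exhausted: no responses left")
        return self._responses.pop(0)


async def main() -> Tuple[str, str, List[str]]:
    client = ScriptedClient(["Thought: search first", "Final answer: done"])
    first = await client.complete("What changed?")
    second = await client.complete("What changed?")
    return first, second, client.prompts


# No network access and no API key: the same script yields the same run.
first, second, prompts = asyncio.run(main())
print(first, "|", second)
```

The same `asyncio.run(main())` wrapper applies to any snippet that uses a bare `await`, such as `await coordinator.run(...)` in the example earlier on this page, when it runs as a plain script rather than inside an existing event loop.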

Install Nanitics.

pip install nanitics

Apache License 2.0. Bug reports on GitHub Issues. Questions and proposals on GitHub Discussions.